PhyEdit enables physical-grounded object manipulation in images editing — moving and editing objects in the 3D space with geometric accuracy and physical consistency.

Abstract

Achieving physically accurate object manipulation in image editing is essential for its potential applications in interactive world models. However, existing visual generative models often fail at precise spatial manipulation, resulting in incorrect scaling and positioning of objects. This limitation primarily stems from the lack of explicit mechanisms to incorporate 3D geometry and perspective projection. To achieve accurate manipulation, we develop PhyEdit, an image editing framework that leverages explicit geometric simulation as contextual 3D-aware visual guidance. By combining this plug-and-play 3D prior with joint 2D–3D supervision, our method effectively improves physical accuracy and manipulation consistency. To support this method and evaluate performance, we present a real-world dataset, RealManip-10K, for 3D-aware object manipulation featuring paired images and depth annotations. We also propose ManipEval, a benchmark with multi-dimensional metrics to evaluate 3D spatial control and geometric consistency. Extensive experiments show that our approach outperforms existing methods, including strong closed-source models, in both 3D geometric accuracy and manipulation consistency.

Demo

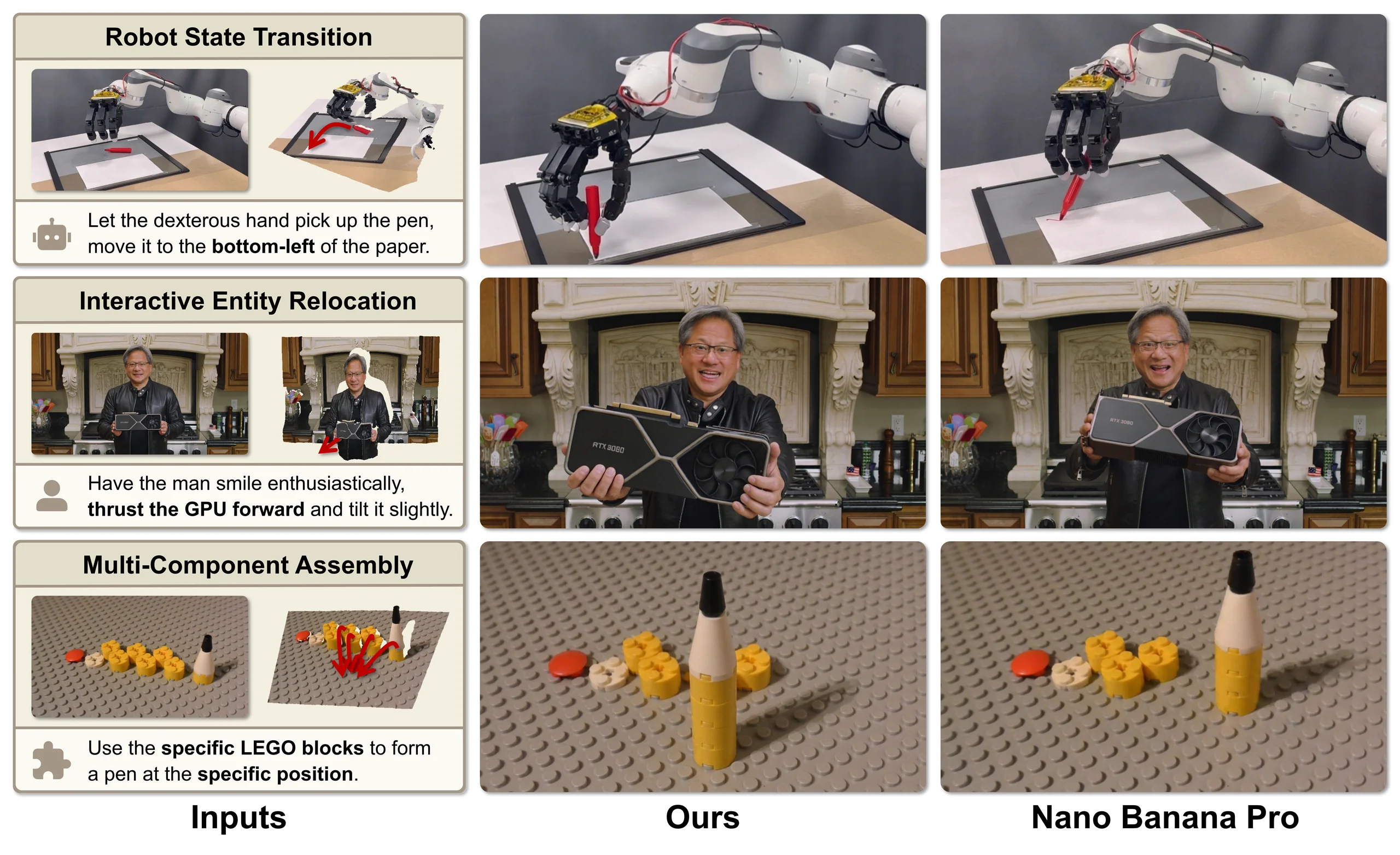

Quantitative results on ManipEval, comparing with strong closed-source models Qwen-Image-2.0-Pro and Nano Banana Pro. Correct and incorrect manipulations are marked in green and red dashed boxes, respectively.

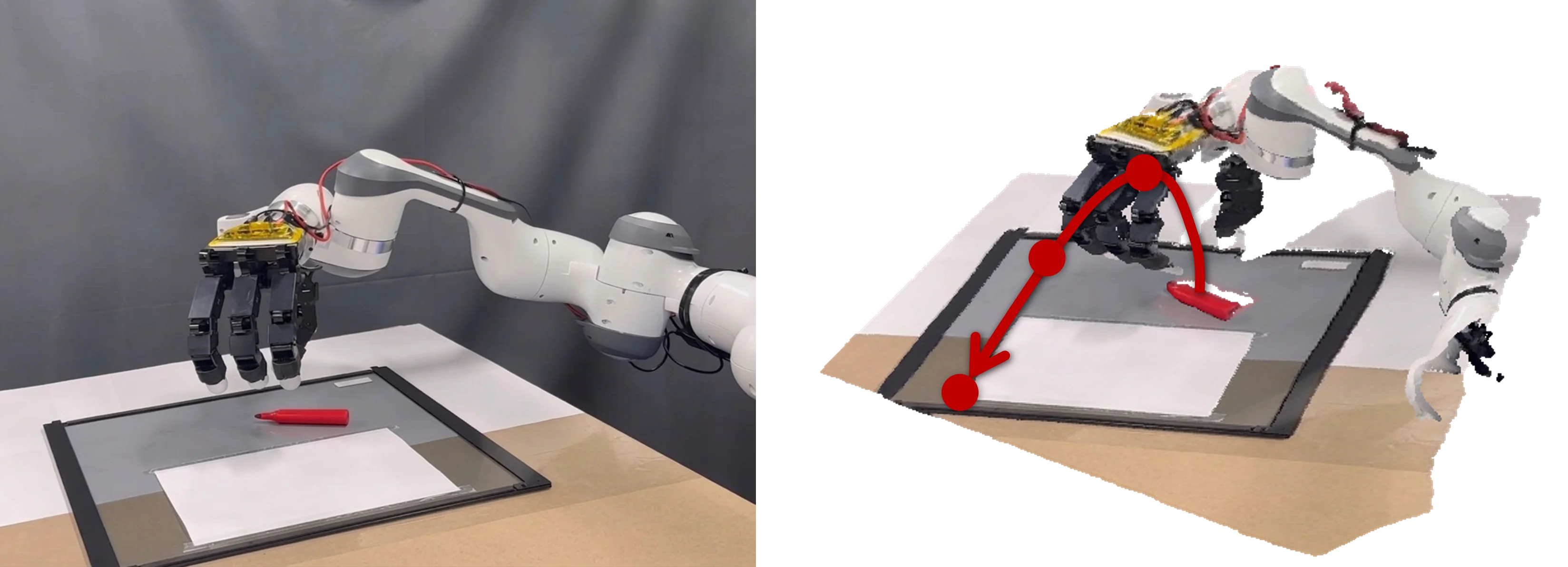

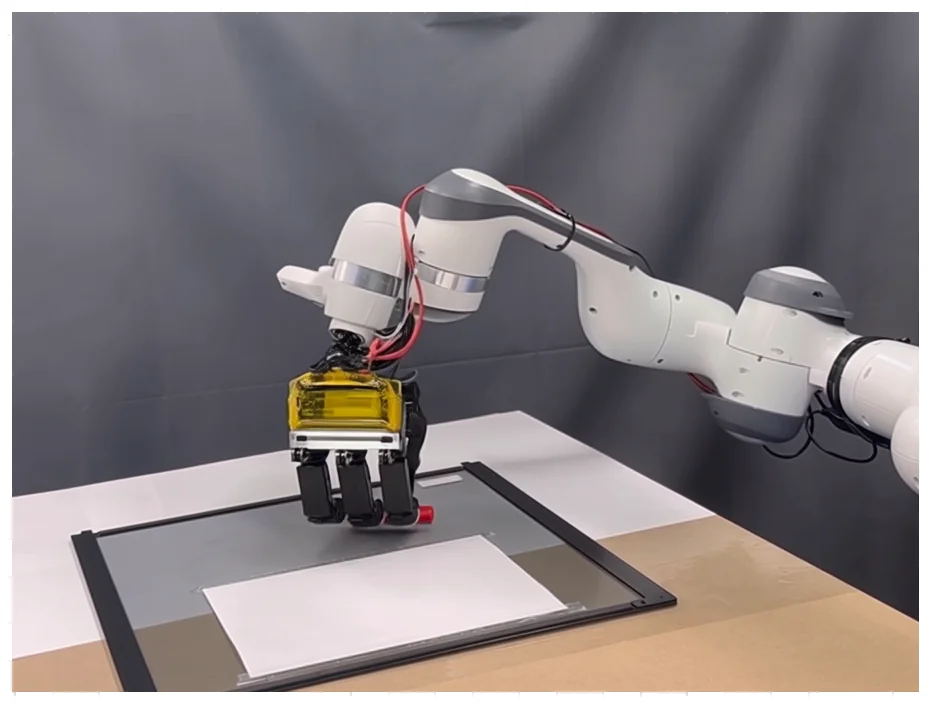

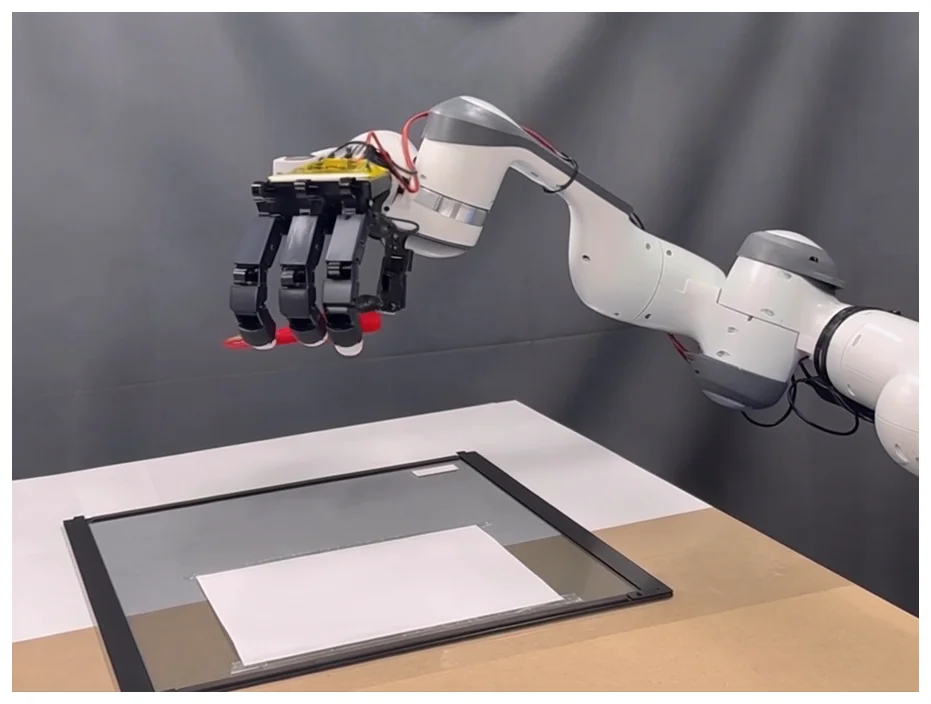

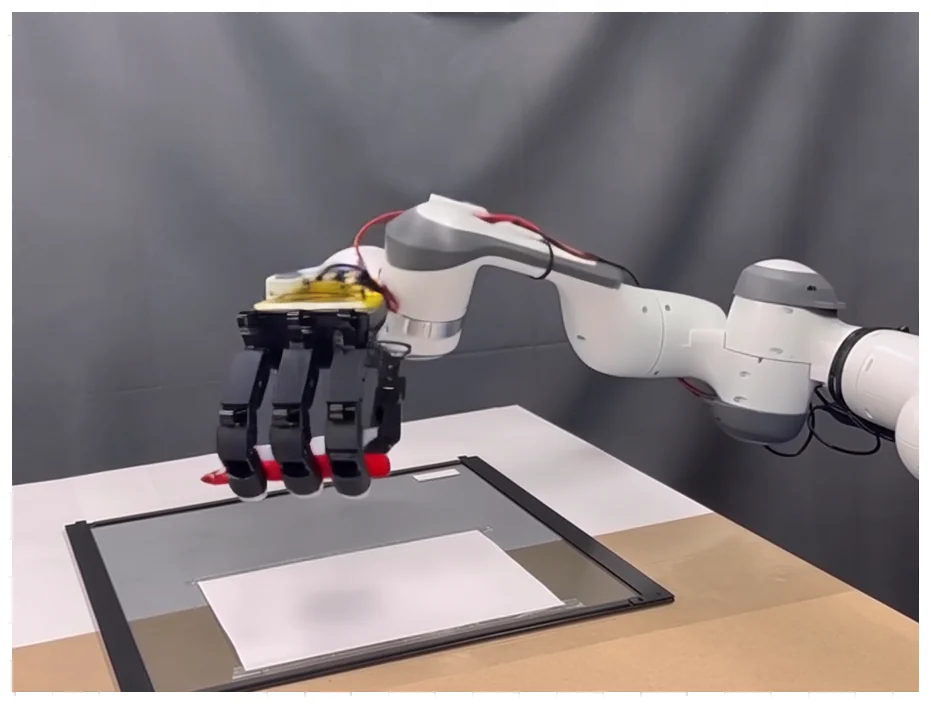

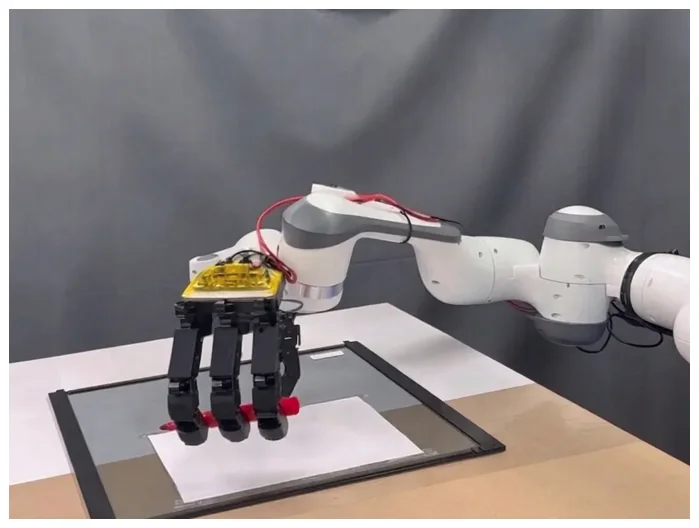

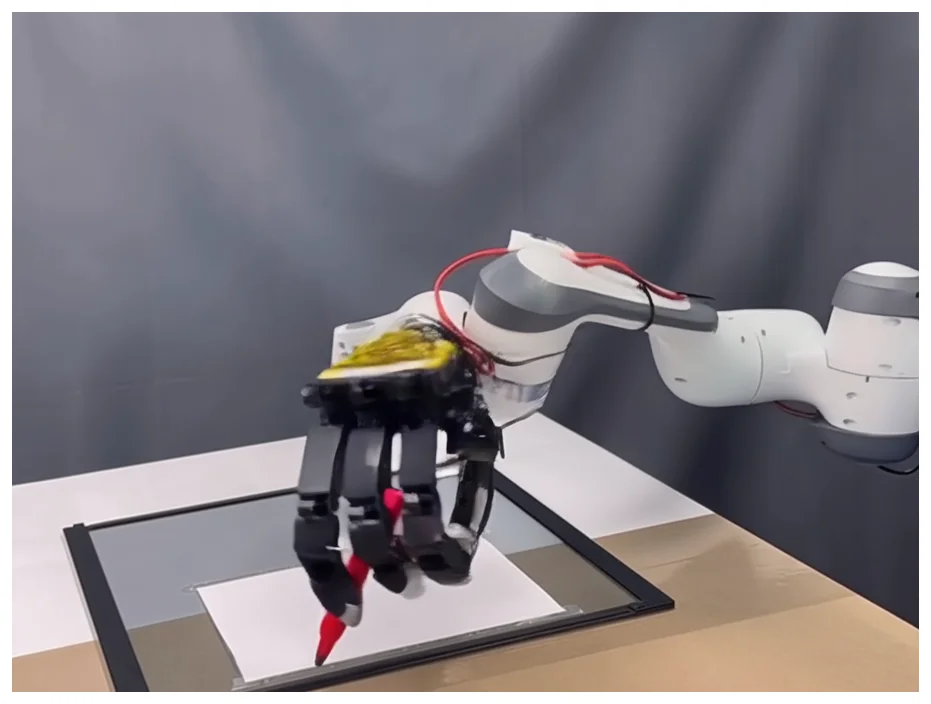

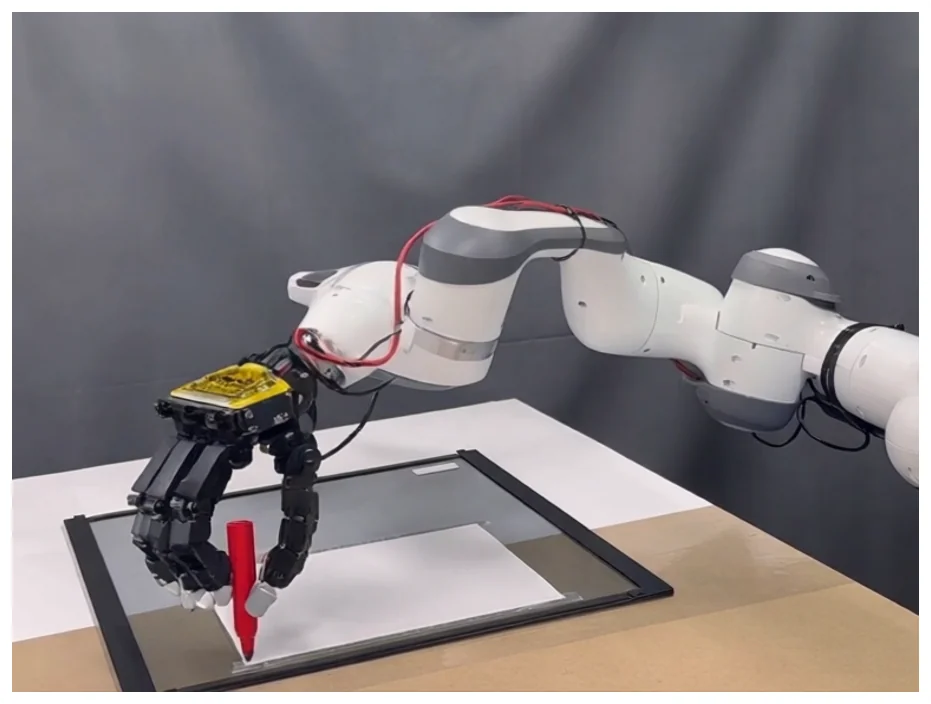

Continuous Object Manipulation

Beyond single-step editing, PhyEdit maintains geometric consistency under continuous state transitions. Given an initial state and a spatial trajectory, our model sequentially renders physically accurate keyframes that can be processed by video interpolation models to synthesize a continuous, physically consistent manipulation video. The robotic arm below is out of our training distribution, demonstrating strong generalization.

Continuous object manipulation along a trajectory. The first slide shows the initial state; subsequent slides are rendered keyframes. A video model interpolates intermediate frames to produce a smooth manipulation video.

Method

PhyEdit combines a DiT editing backbone with a 3D foundation model. The framework has three components: (1) a 3D transformation module that generates a depth-aware preview, (2) the DiT denoising backbone, and (3) a joint training loss in both 2D latent and 3D depth spaces.

3D Transformation Module

Given a source image , object mask , and transition vector , we predict depth and camera pose , then edit the object directly in 3D space:

The preview image serves as an additional condition for the DiT backbone, providing explicit geometric guidance and naturally supporting multi-object manipulation without iterative editing.

Joint Supervision

Latent-space denoising loss alone is insufficient for 3D manipulation, as it emphasizes appearance reconstruction over geometric correctness. We add a depth-space supervision term using the scale-invariant logarithmic (SILog) loss:

where balances latent and depth supervision. This is lightweight and plug-and-play — it can be added to different DiT-based editors with minimal modification.

RealManip-10K Dataset

We build RealManip-10K, a real-world dataset of paired images where objects are manipulated in 3D space, especially along the depth axis. Each pair provides depth maps, object masks, and representative 3D object coordinates.

The pipeline collects videos from OpenVid-1M, VIDGEN-1M, PE-Video-Dataset, and object-tracking datasets (LaSOT, GoT-10K, TrackingNet). A novel camera token clustering approach using DBSCAN ensures near-static camera conditions without relying on optical flow or hand-crafted feature matching.

Experiments

Quantitative Results on ManipEval

| Method | DIoU ↑ | Mask IoU ↑ | AbsRel ↓ | δ₁.₂₅ ↑ | Chamfer ↓ | Centroid ↓ | RA-DINO ↑ | DeQA ↑ | Phys-VLM ↑ |

|---|---|---|---|---|---|---|---|---|---|

| GeoDiffuser | 51.72 | 20.10 | 67.64 | 31.40 | 73.41 | 64.56 | 14.78 | 56.72 | 54.62 |

| DiffusionHandles | 56.22 | 18.88 | 57.36 | 31.73 | 73.00 | 59.65 | 17.08 | 61.83 | 46.90 |

| Move-and-Act | 46.89 | 10.97 | 68.47 | 29.30 | 64.59 | 63.62 | 13.01 | 60.79 | 60.85 |

| OBJect 3DIT | 46.63 | 10.18 | 66.93 | 32.75 | 62.30 | 64.14 | 14.31 | 48.01 | 66.65 |

| GoodDrag | 51.07 | 13.29 | 69.60 | 34.19 | 51.27 | 58.39 | 20.85 | 74.08 | 85.08 |

| PixelMan | 49.48 | 11.49 | 66.46 | 35.10 | 50.18 | 57.13 | 21.17 | 72.79 | 73.60 |

| Qwen-Image-Edit | 53.28 | 13.80 | 64.63 | 40.61 | 46.31 | 52.48 | 26.09 | 75.67 | 90.55 |

| LightningDrag | 53.81 | 18.90 | 57.07 | 35.99 | 45.76 | 53.57 | 21.92 | 73.60 | 88.60 |

| ChronoEdit | 48.92 | 9.26 | 65.80 | 36.35 | 45.02 | 54.40 | 21.73 | 75.29 | 92.15 |

| GPT-Image-1.5† | 52.33 | 11.34 | 70.95 | 36.50 | 39.79 | 52.78 | 23.80 | 75.82 | 88.68 |

| Qwen-Image-2.0-Pro† | 56.48 | 15.60 | 55.93 | 41.39 | 29.64 | 43.48 | 31.04 | 68.26 | 82.91 |

| Nano Banana Pro† | 59.97 | 18.93 | 55.02 | 46.11 | 25.33 | 35.62 | 34.77 | 77.48 | 91.06 |

| Ours | 65.33 | 27.20 | 49.53 | 51.08 | 18.93 | 32.12 | 36.91 | 75.48 | 93.72 |

† Proprietary commercial model. All metrics normalized to [0, 100].

Our method achieves the best overall performance across manipulation-related metrics, outperforming strong closed-source commercial systems on geometry-sensitive measures. Against Nano Banana Pro: DIoU +5.36, Chamfer distance −6.40, RA-DINO +2.14. Our lead over commercial baselines grows further on multi-object scenes (Chamfer gap: 5.19 → 6.58; δ₁.₂₅ gap: 1.91 → 8.02).

BibTeX

@misc{xu2026phyeditrealworldobjectmanipulation, title={PhyEdit: Towards Real-World Object Manipulation via Physically-Grounded Image Editing}, author={Ruihang Xu and Dewei Zhou and Xiaolong Shen and Fan Ma and Yi Yang}, year={2026}, eprint={2604.07230}, archivePrefix={arXiv}, primaryClass={cs.CV}, url={https://arxiv.org/abs/2604.07230},}