ContextGen uses user-provided reference images to generate images with multiple instances, offering precise layout control while preserving the identity of each instance.

Abstract

Multi-instance image generation (MIG) remains a significant challenge for modern diffusion models, which struggle both to control object layout precisely and to preserve the identities of multiple distinct subjects. To address these limitations, we introduce ContextGen, a novel Diffusion Transformer framework for multi-instance generation based on contextual learning guided by both layout and reference images. Our approach integrates two key technical contributions: a Contextual Layout Anchoring (CLA) mechanism, which incorporates the composite layout image into the generation context to robustly anchor each object at its desired position, and Identity Consistency Attention (ICA), an innovative attention mechanism that leverages contextual reference images to keep the identities of multiple instances consistent. Recognizing the lack of large-scale, hierarchically structured datasets for this task, we introduce IMIG-100K, the first dataset with detailed layout and identity annotations. Extensive experiments demonstrate that ContextGen sets a new state of the art, outperforming existing methods in control precision, identity fidelity, and overall visual quality.

Method

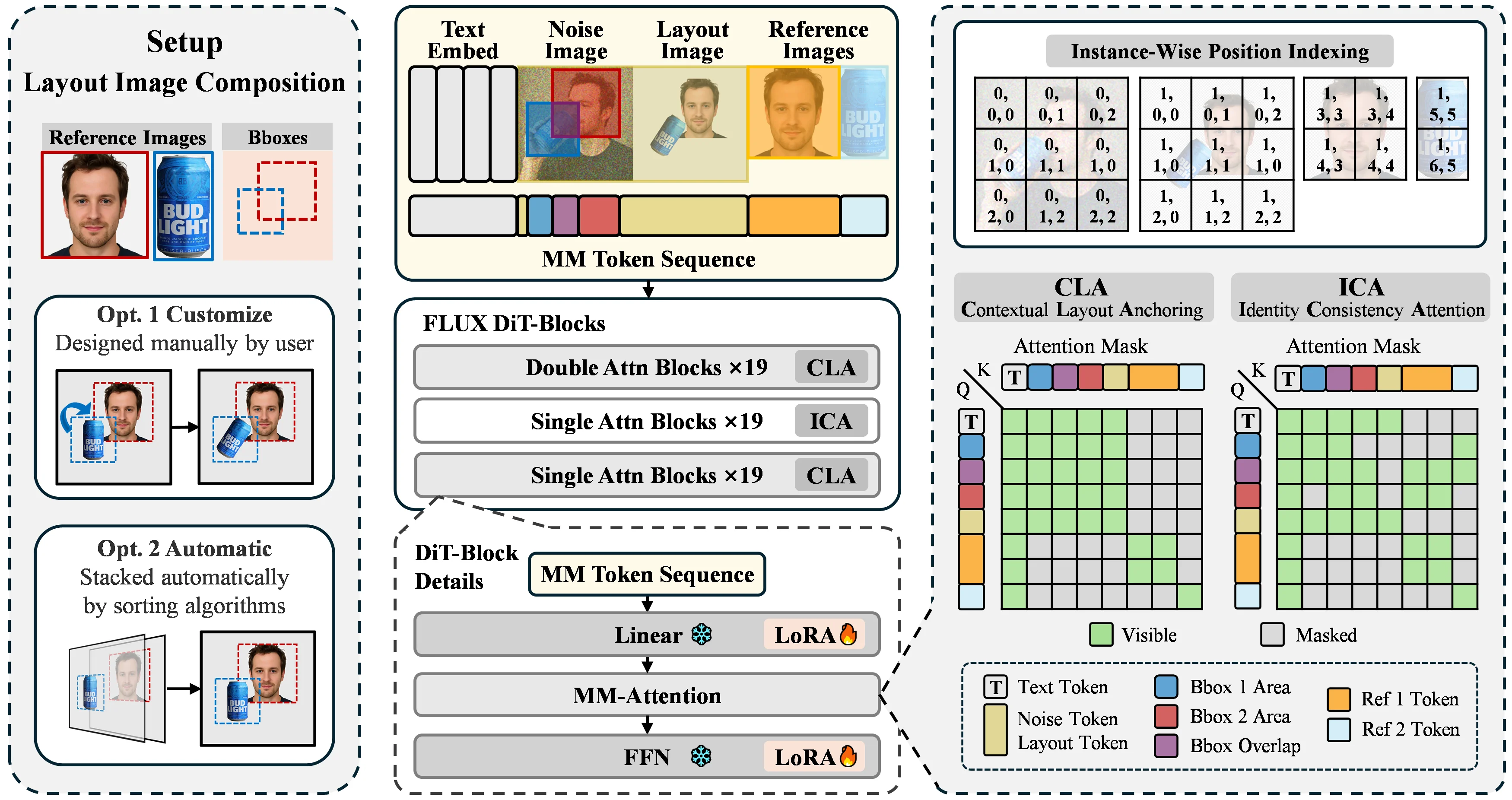

A composite layout image, either user-provided or automatically synthesized, provides precise spatial control. Reference images complement it by overcoming the limitations of layout-only generation, such as the loss of instance information caused by overlaps and by dimensional compression. Two key innovations drive the framework:
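The overlap problem can be made concrete with a small sketch. It assumes that a layout image is synthesized by pasting instances onto a canvas in order, so later boxes cover earlier ones. The painting order and the helpers `composite_owner_map` and `visible_fraction` are hypothetical illustrations, not ContextGen's synthesis code. Any occluded instance loses part of its appearance in the layout, and that is the gap reference images are meant to fill.

```python
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), half-open pixel box


def composite_owner_map(boxes: List[Box], width: int, height: int) -> List[List[Optional[int]]]:
    """Hypothetical layout synthesis: paint boxes in order, recording the last instance drawn per pixel."""
    owner: List[List[Optional[int]]] = [[None] * width for _ in range(height)]
    for idx, (x0, y0, x1, y1) in enumerate(boxes):
        for y in range(max(0, y0), min(height, y1)):
            for x in range(max(0, x0), min(width, x1)):
                owner[y][x] = idx  # later instances occlude earlier ones
    return owner


def visible_fraction(boxes: List[Box], width: int, height: int) -> List[float]:
    """Fraction of each instance's on-canvas box area that is still visible in the composite."""
    owner = composite_owner_map(boxes, width, height)
    fractions = []
    for idx, (x0, y0, x1, y1) in enumerate(boxes):
        # Clip the box to the canvas before measuring its area.
        area = max(0, min(x1, width) - max(x0, 0)) * max(0, min(y1, height) - max(y0, 0))
        visible = sum(1 for row in owner for v in row if v == idx)
        fractions.append(visible / area if area else 0.0)
    return fractions


if __name__ == "__main__":
    # Instance 1 covers a 2x2 corner of instance 0.
    print(visible_fraction([(0, 0, 4, 4), (2, 2, 6, 6)], 8, 8))  # [0.75, 1.0]
```

A quarter of instance 0 is hidden in the composite, so a layout-only model would see incomplete information about it.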

- Contextual Layout Anchoring (CLA) leverages contextual learning to anchor each instance at its desired position by incorporating the layout image into the generation context, achieving robust layout control.

- Identity Consistency Attention (ICA) propagates fine-grained information from contextual reference images to their respective desired locations, preserving the detailed identity of multiple instances.
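ICA's routing of reference information to target locations can be pictured as an attention mask over a single in-context token sequence. The sketch below makes several assumptions that are not ContextGen's published implementation:

- The sequence is ordered as target tokens, then layout tokens, then one block per reference image.
- Target and layout tokens attend to each other freely.
- Each reference block attends only to itself.
- Target patches inside instance i's bounding box may also attend to reference i.

The function name `build_ica_mask` is hypothetical.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (col0, row0, col1, row1) in patch units, half-open


def build_ica_mask(grid_h: int, grid_w: int, boxes: List[Box], ref_len: int) -> List[List[bool]]:
    """Hypothetical ICA-style mask: mask[q][k] is True if query token q may attend key token k."""
    n_target = grid_h * grid_w
    n_layout = grid_h * grid_w  # assumed: layout image uses the same patch grid as the target
    n_total = n_target + n_layout + ref_len * len(boxes)
    mask = [[False] * n_total for _ in range(n_total)]

    def ref_span(i: int) -> range:
        start = n_target + n_layout + i * ref_len
        return range(start, start + ref_len)

    # Target and layout tokens share global context with each other.
    for q in range(n_target + n_layout):
        for k in range(n_target + n_layout):
            mask[q][k] = True

    # Each reference block attends only within itself, keeping identities separate.
    for i in range(len(boxes)):
        for q in ref_span(i):
            for k in ref_span(i):
                mask[q][k] = True

    # Target patches inside box i may additionally attend to reference i.
    for i, (c0, r0, c1, r1) in enumerate(boxes):
        for r in range(max(0, r0), min(grid_h, r1)):
            for c in range(max(0, c0), min(grid_w, c1)):
                q = r * grid_w + c  # row-major target token index
                for k in ref_span(i):
                    mask[q][k] = True
    return mask


if __name__ == "__main__":
    # 2x2 target grid: left column belongs to reference 0, right column to reference 1.
    mask = build_ica_mask(2, 2, [(0, 0, 1, 2), (1, 0, 2, 2)], ref_len=1)
    print(len(mask), mask[0][8], mask[0][9])  # 10 True False
```

Restricting each reference to the patches inside its own box is one way to keep identities from leaking between instances. Whether ICA uses hard masks, soft biases, or another scheme is not specified here.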

An enhanced position indexing strategy systematically organizes the multiple images in the generation context (layout, references, and target) and differentiates their relationships.
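A common way to keep several images distinct inside one Diffusion Transformer sequence is to give each token a multi-axis position id, with one axis identifying the image. The sketch below assumes ids of the form (image index, row, column). The target gets image index 0. The layout image shares the target's row and column coordinates but gets its own image index. Each reference gets its own image index. This scheme and the names `grid_ids` and `position_ids` are hypothetical, not the exact indexing strategy ContextGen uses.

```python
from typing import List, Tuple

PosId = Tuple[int, int, int]  # (image_index, row, col)


def grid_ids(image_index: int, h: int, w: int) -> List[PosId]:
    """Row-major (image_index, row, col) ids for an h x w patch grid."""
    return [(image_index, r, c) for r in range(h) for c in range(w)]


def position_ids(grid_h: int, grid_w: int, ref_shapes: List[Tuple[int, int]]) -> List[PosId]:
    """Hypothetical indexing: target, then layout, then one image index per reference."""
    ids = grid_ids(0, grid_h, grid_w)        # target image
    ids += grid_ids(1, grid_h, grid_w)       # layout image: same rows/cols as the target
    for i, (h, w) in enumerate(ref_shapes):  # references: a distinct image index each
        ids += grid_ids(2 + i, h, w)
    return ids


if __name__ == "__main__":
    ids = position_ids(2, 2, [(1, 2), (1, 1)])
    print(len(ids), ids[4], ids[10])  # 11 (1, 0, 0) (3, 0, 0)
```

Sharing spatial coordinates between the layout and the target lets layout patches line up with the positions they anchor. The separate image axis keeps every token's id unique.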

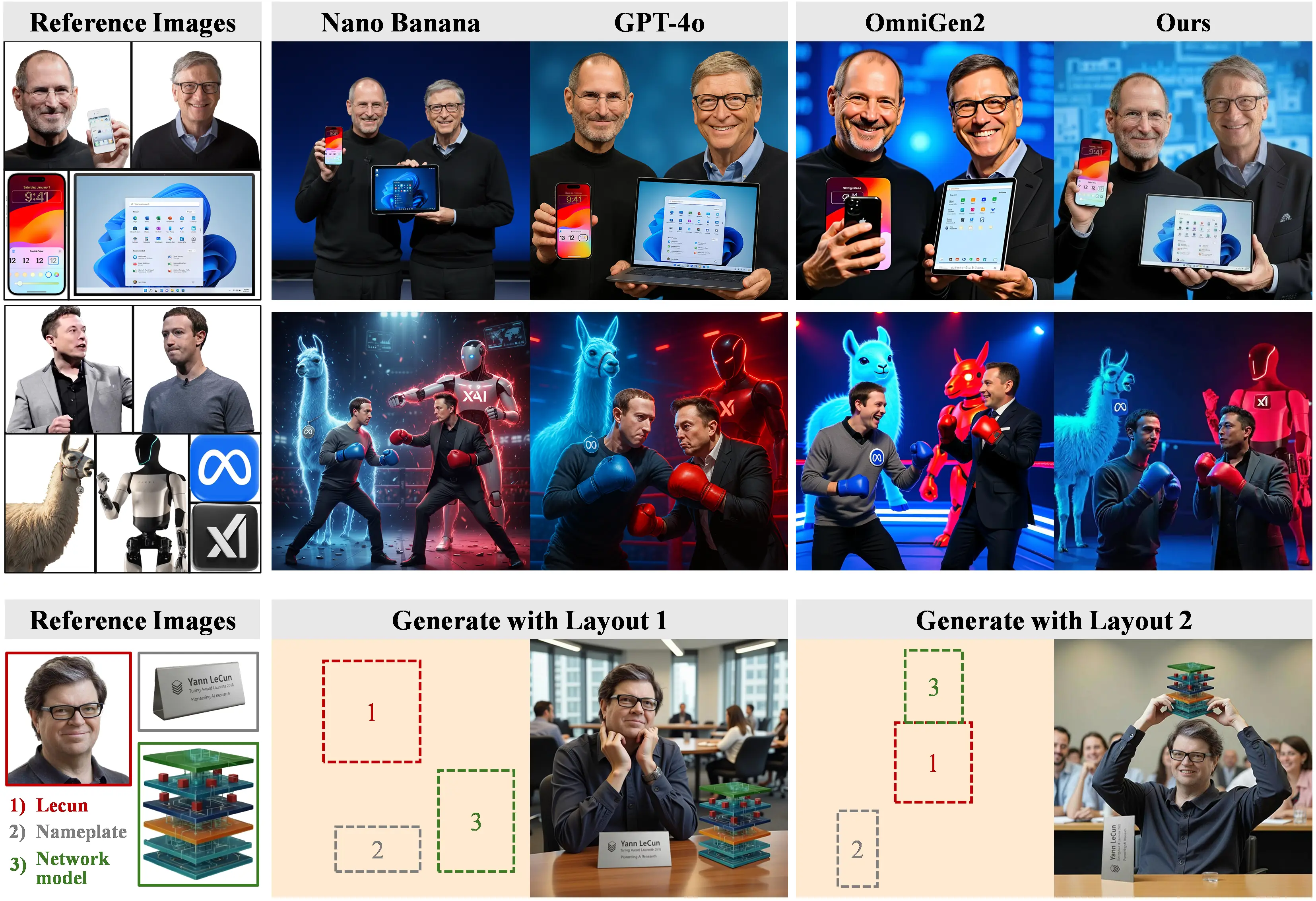

Identity-Consistent Subject-Driven Generation

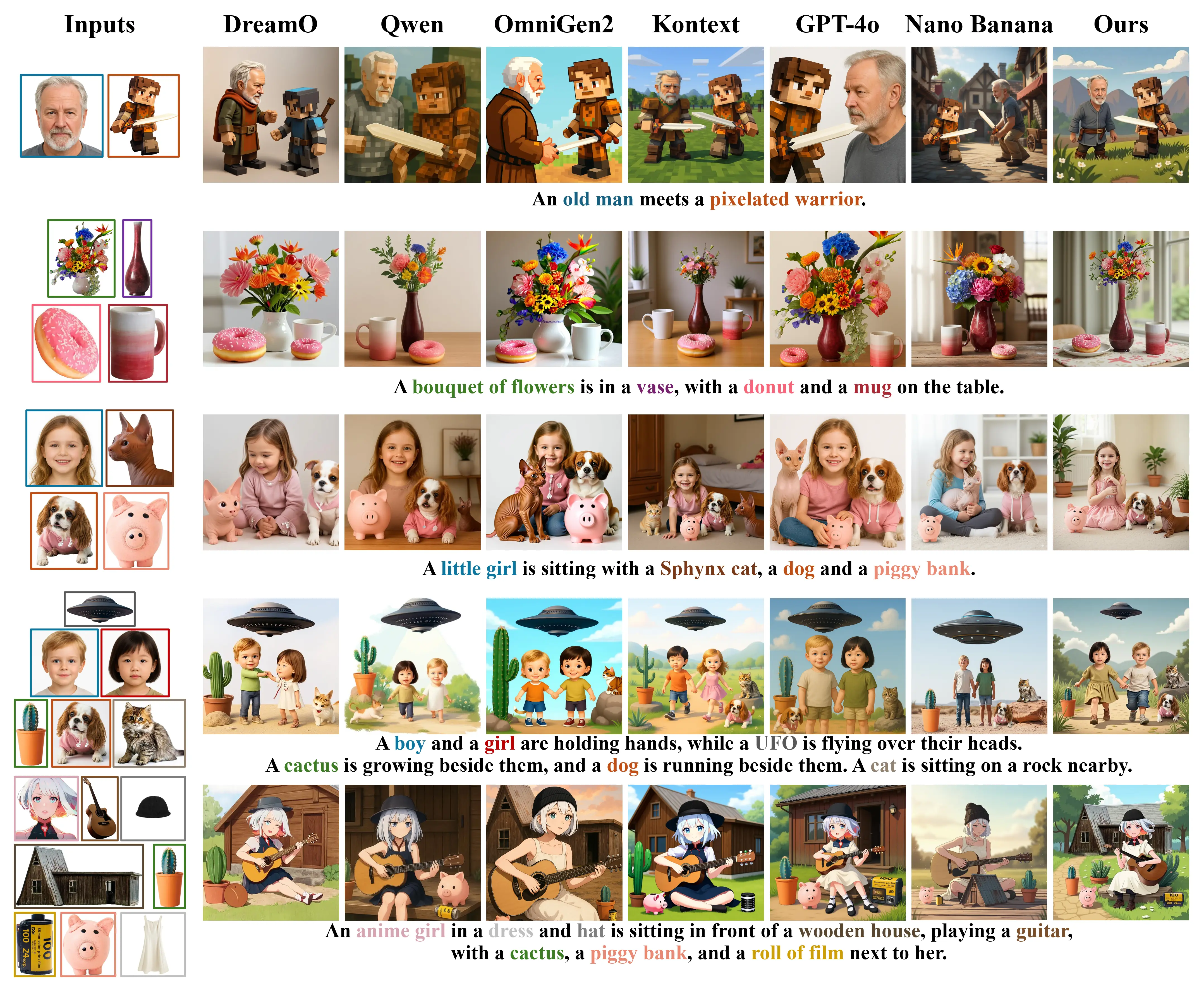

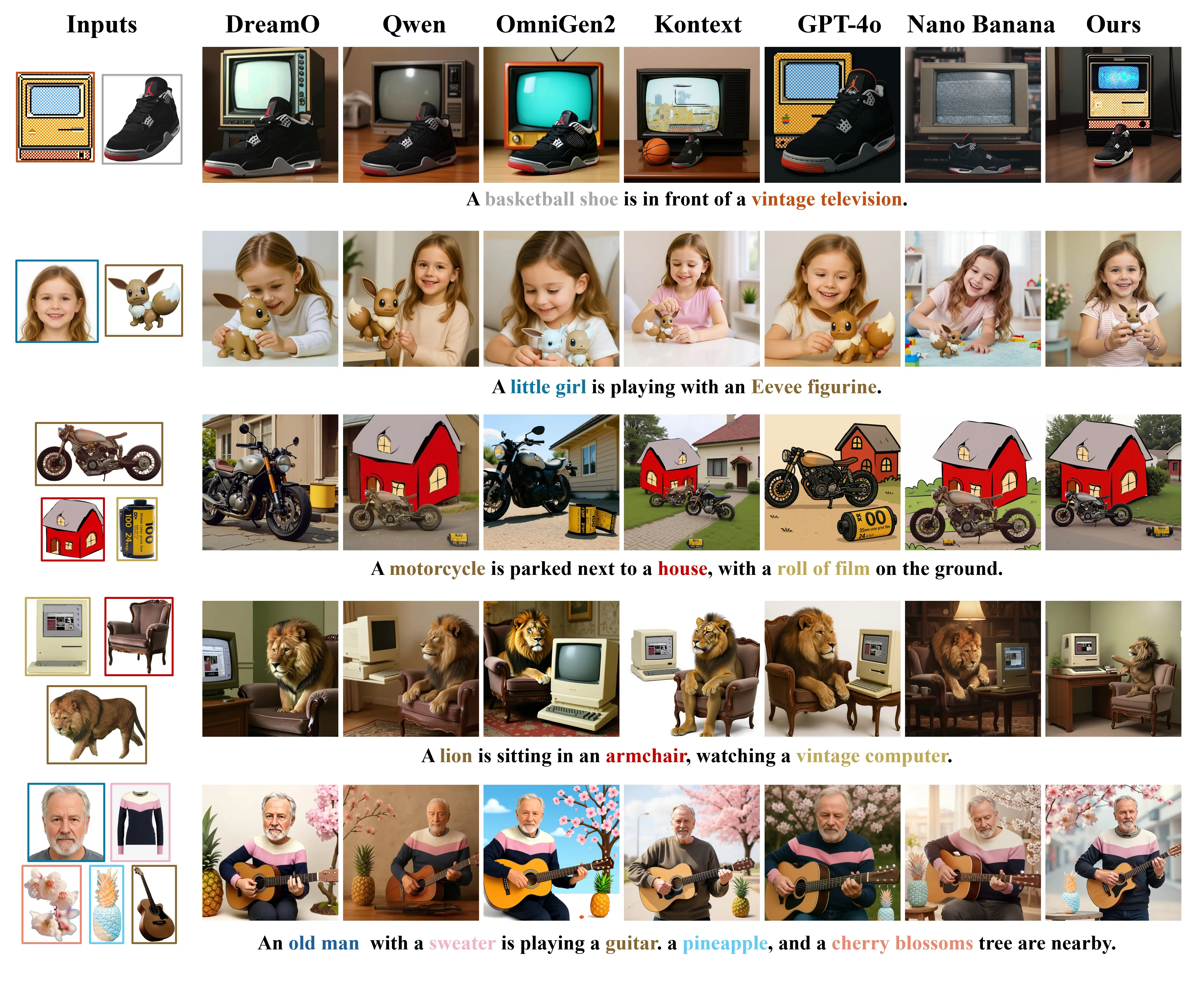

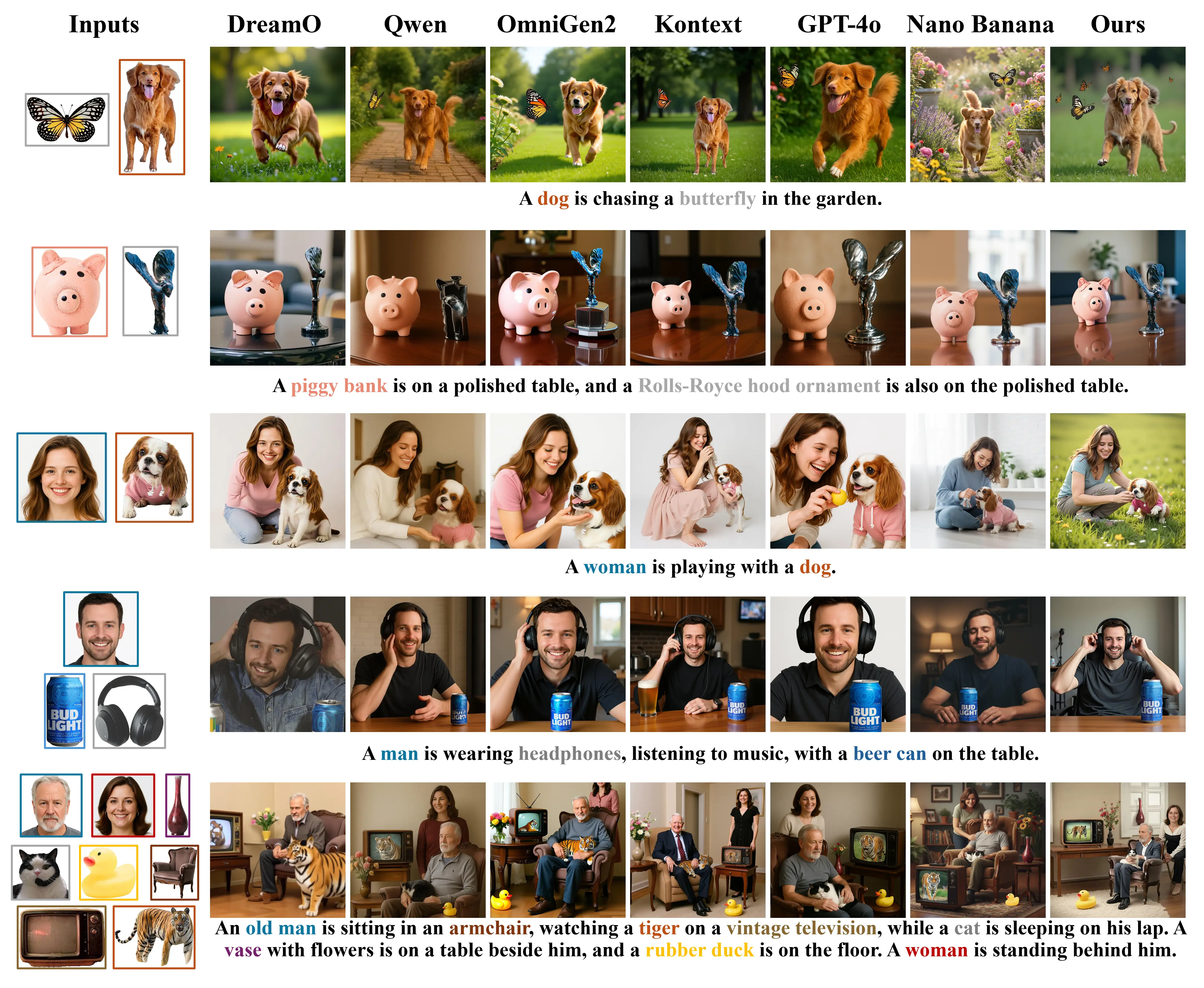

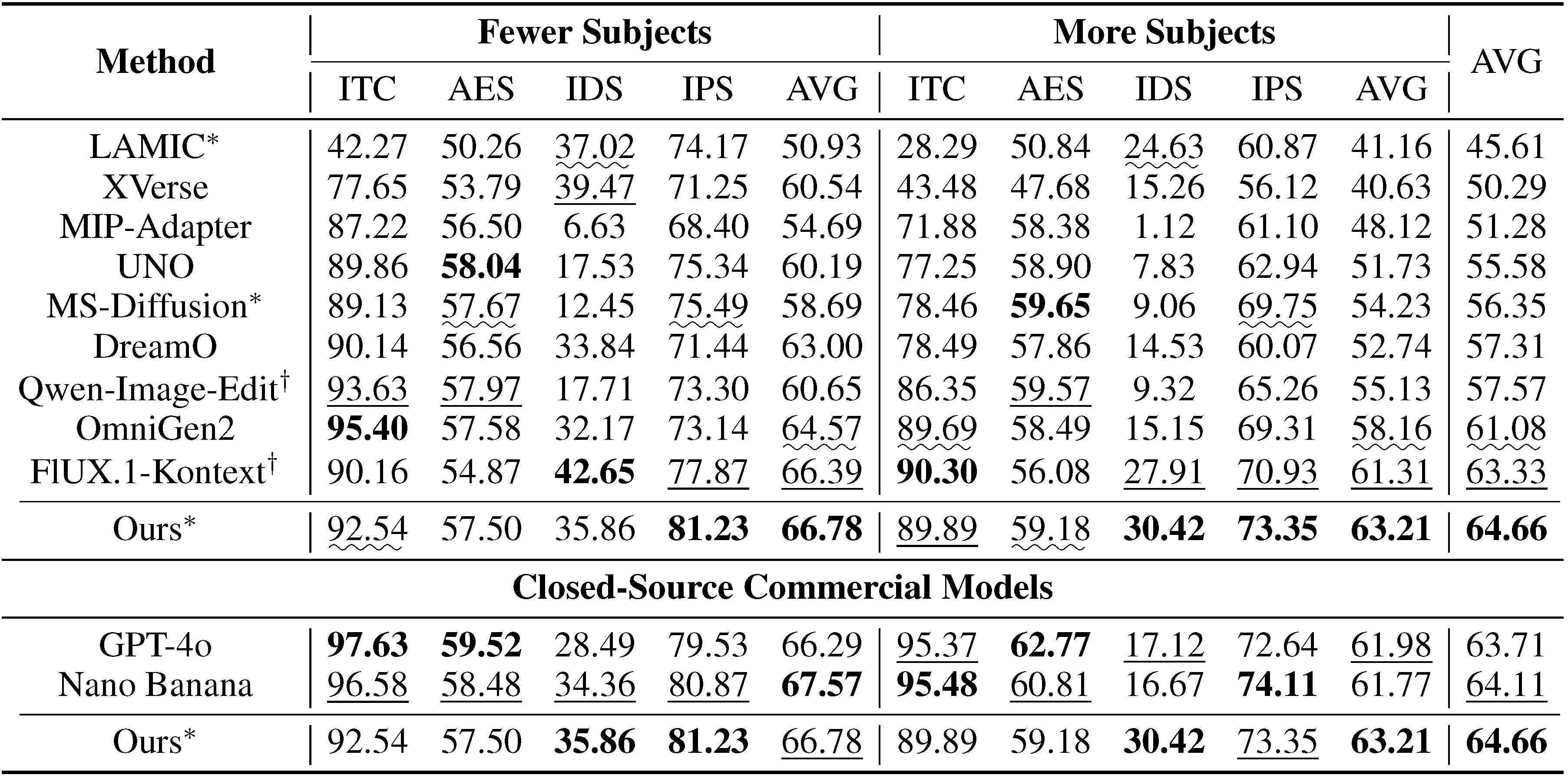

Demo on LAMICBench++: comparison with existing open-source state-of-the-art subject-driven generation methods and closed-source commercial models.

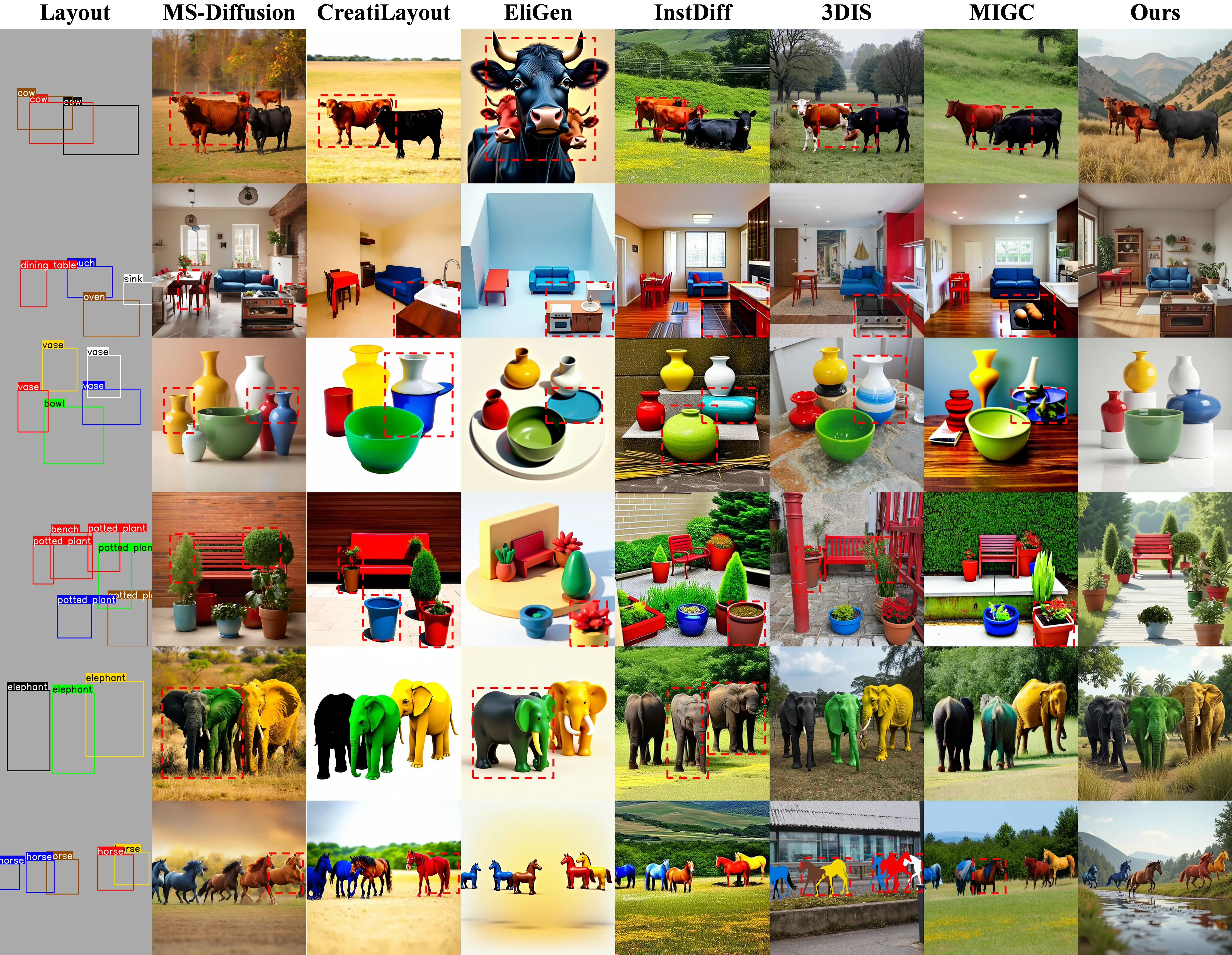

Precise Layout Control for Multi-Instance Scenes

IMIG-100K Dataset

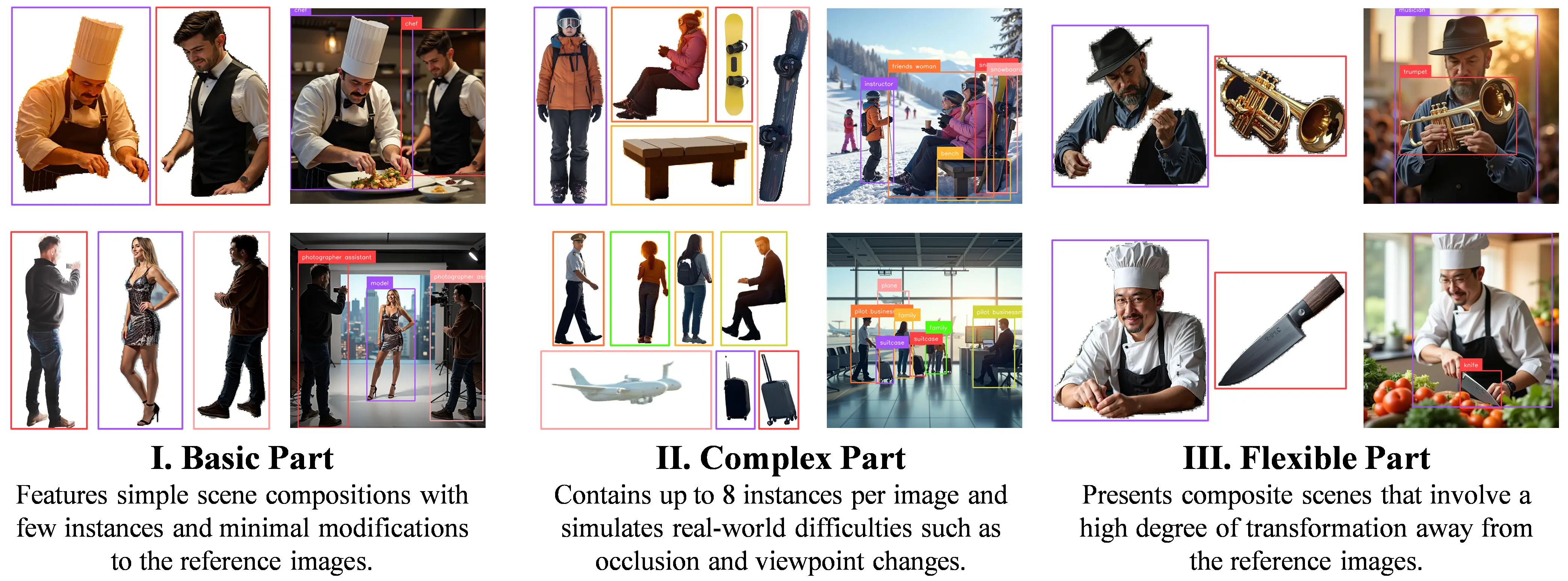

IMIG-100K is a large-scale, structured dataset designed for identity-consistent multi-instance generation. It is organized into three progressive difficulty levels to cover a wide range of real-world scenarios.

- Basic Instance Composition: foundational scenes with layout and identity annotations.

- Complex Instance Interaction: up to 8 instances per image, with references covering occlusion, viewpoint rotation, and pose changes.

- Flexible Composition with References: instances are composited into scenes with significant appearance variation relative to their references, training the model to handle flexible identity transformations.
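The three-level organization of IMIG-100K can be illustrated with a possible sample record. The following choices are hypothetical and are not IMIG-100K's actual annotation format:

- the field names, the `Level` enum, and the reference paths;
- a validation rule that checks each box lies inside its image;
- a cap of 8 instances for the Complex Instance Interaction level, which mirrors the description above.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List, Tuple


class Level(IntEnum):
    """Hypothetical labels for the three difficulty levels."""
    BASIC_COMPOSITION = 1
    COMPLEX_INTERACTION = 2
    FLEXIBLE_COMPOSITION = 3


@dataclass
class Instance:
    caption: str
    bbox: Tuple[int, int, int, int]  # (x0, y0, x1, y1) in pixels
    reference_path: str              # hypothetical path to the identity reference image


@dataclass
class Sample:
    level: Level
    image_path: str
    width: int
    height: int
    instances: List[Instance] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the record looks consistent."""
        problems = []
        # Assumed cap, taken from "up to 8 instances per image" for this level.
        if self.level == Level.COMPLEX_INTERACTION and len(self.instances) > 8:
            problems.append("complex-interaction samples hold at most 8 instances")
        for i, inst in enumerate(self.instances):
            x0, y0, x1, y1 = inst.bbox
            if not (0 <= x0 < x1 <= self.width and 0 <= y0 < y1 <= self.height):
                problems.append(f"instance {i}: bbox {inst.bbox} outside image or empty")
        return problems


if __name__ == "__main__":
    sample = Sample(Level.COMPLEX_INTERACTION, "scene.jpg", 512, 512,
                    [Instance("a red mug", (10, 20, 110, 140), "refs/mug.png")])
    print(sample.validate())  # []
```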

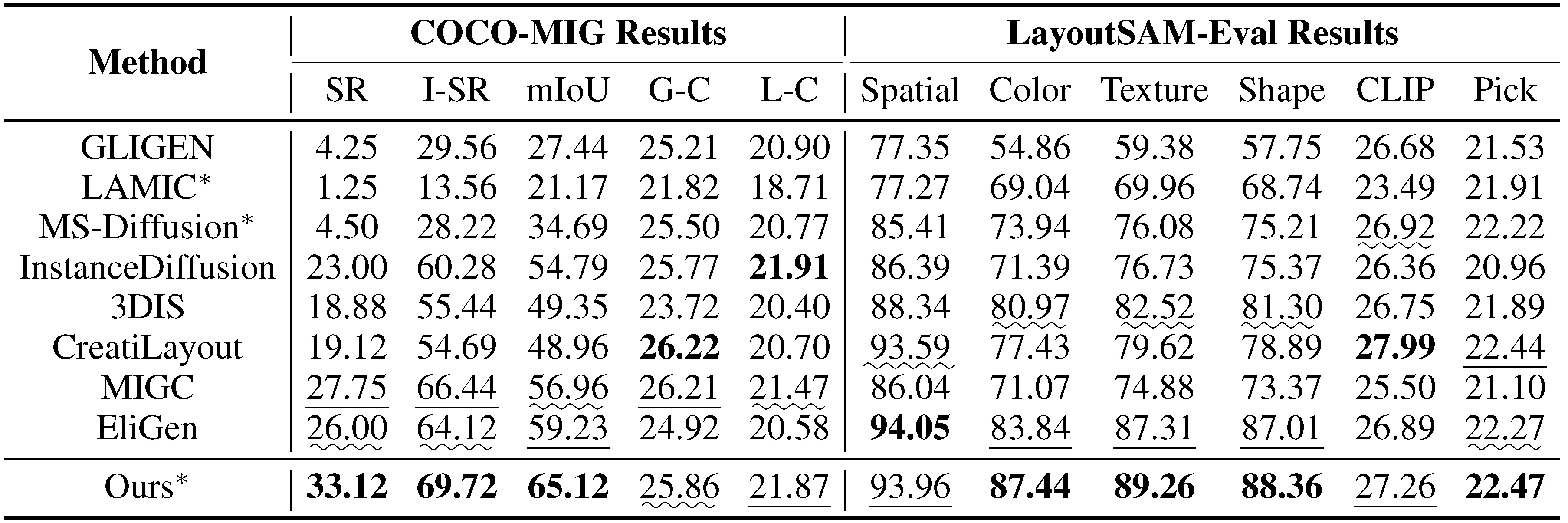

Quantitative Results

Interactive GUI

ContextGen comes with an intuitive GUI — upload reference images, draw bounding boxes, and generate, all without writing any code.

The ContextGen GUI demo — drag, drop, and generate with full layout and identity control.

BibTeX

@inproceedings{xu2026contextgen,
  title={ContextGen: Contextual Layout Anchoring for Identity-Consistent Multi-Instance Generation},
  author={Ruihang Xu and Dewei Zhou and Fan Ma and Yi Yang},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026}
}